> Another question: has anyone done a benchmark comparison for 3D rendering in Chimera between a top-of-the-line GeForce GTX card and a top Quadro card? Is there a strong improvement with the Quadro, which might justify the price gap?

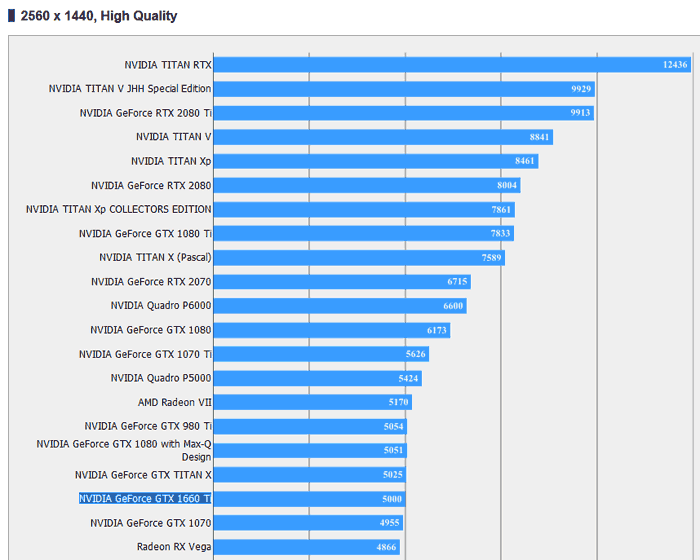

> Can Chimera make use of more than one GPU for 3D rendering? We have 3 GeForce GTX 780 Ti cards (mainly used for number crunching) on a particular workstation and would be interested in making full use of them for rendering tomograms or serial block-face imaging data.

Here are Chimera benchmarks for a range of graphics cards: In general I think the GeForce cards perform better (fewer bugs, faster speed) than the Quadro cards, and I would only recommend Quadro if you use stereoscopic display with shutter glasses.

I think slow rendering of maps in solid (grayscale) style typically happens in Chimera when the map data doesn’t fit on the graphics card, so every frame it has to transfer all the data to the card, and the performance plummets once you get a map that big. If the data does fit on the graphics card, it usually renders at full frame rate (60 frames per second), although that isn’t true of all graphics cards. This all relates to the speed of rotating the model. When you first load a big map it can take a long time because disk drive speed is slow; a solid state drive helps speed this up. If you explain exactly the case where you see slow rendering, I can perhaps advise.

From what I understand, multi-card setups do things like have each of 2 cards render every other frame: since the graphics are pipelined, the current frame and the next frame could be rendered simultaneously, possibly doubling the speed. I have my doubts that this will improve Chimera rendering speed for large density maps.
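The point above about map data fitting on the graphics card can be checked with rough arithmetic. The following is only a sketch: the bytes per voxel, the 3 GiB card size, and the `usable_fraction` headroom are illustrative assumptions, and how Chimera actually stores map data on the card is not stated in this thread.

```python
def map_size_bytes(dims, bytes_per_voxel=1):
    """Raw size of a 3D map with grid dimensions (nx, ny, nz)."""
    nx, ny, nz = dims
    return nx * ny * nz * bytes_per_voxel


def fits_on_card(dims, card_memory_bytes, bytes_per_voxel=1, usable_fraction=0.8):
    """True if the raw map fits in an assumed usable share of card memory.

    usable_fraction is a hypothetical allowance for memory the driver,
    framebuffers, and other data already occupy.
    """
    size = map_size_bytes(dims, bytes_per_voxel)
    return size <= usable_fraction * card_memory_bytes


GIB = 1024 ** 3
card_memory = 3 * GIB  # assumed card memory; substitute your own card's value

for dims in [(1024, 1024, 512), (2048, 2048, 1024)]:
    size = map_size_bytes(dims, bytes_per_voxel=2)  # assuming 16-bit voxels
    verdict = "fits" if fits_on_card(dims, card_memory, 2) else "does not fit"
    print(dims, f"{size / GIB:.1f} GiB", verdict)
```

Under these assumptions a 1024 x 1024 x 512 map of 16-bit values is 1 GiB and fits, while a 2048 x 2048 x 1024 map is 8 GiB and would fall into the slow case where data is transferred every frame.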

With Nvidia cards this technology is called SLI and with AMD cards it is called CrossFire. I don’t think applications need any special support to use multiple cards; the system graphics driver distributes the work across 2 or more cards. I don’t know whether using multiple cards this way is likely to improve rendering speed.
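The "every other frame" scheme described above can be sketched as a toy model. This is not how any driver is implemented; `assign_frames`, `ideal_fps`, and the `serial_ms` parameter are hypothetical names for illustration. `serial_ms` stands for any per-frame work that is not split across cards, which is one way the ideal doubling could fail to appear.

```python
def assign_frames(num_frames, num_cards):
    """Round-robin assignment: frame i is rendered by card i % num_cards."""
    return [i % num_cards for i in range(num_frames)]


def ideal_fps(render_ms, num_cards, serial_ms=0.0):
    """Idealized frame rate when cards render alternate frames in parallel.

    render_ms: time for one card to render one frame.
    serial_ms: hypothetical per-frame work that is not split across cards.
    """
    per_frame_ms = render_ms / num_cards + serial_ms
    return 1000.0 / per_frame_ms


print(assign_frames(6, 2))                 # which card renders each frame
print(ideal_fps(40.0, 1))                  # one card, no serial work
print(ideal_fps(40.0, 2))                  # two cards, ideal doubling
print(ideal_fps(40.0, 2, serial_ms=30.0))  # two cards, large serial share
```

In this model two cards double the frame rate only when `serial_ms` is zero; as the serial share grows, the gain from a second card shrinks. Whether Chimera's rendering of large maps behaves this way is untested here.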

I haven’t tried Chimera with 2 or more graphics cards working together.

Multi GPU rendering

Tom Goddard goddard at
